Technology · 5 min read

Apple Intelligence - AI for the Rest of Us

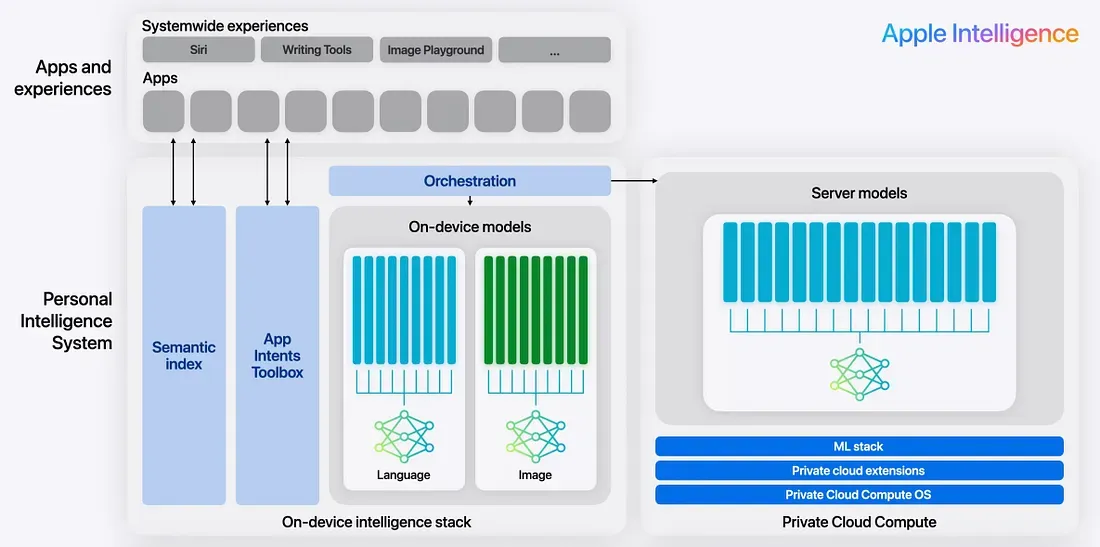

Discover how Apple Intelligence is making AI personal, private, and offline-capable. Learn about Apple's on-device Foundation Models, Private Cloud Compute, and the Core ML ecosystem.

Artificial intelligence is already rewriting the rules of the B2B world. Enterprises are racing to automate workflows, optimize supply chains, and replace entire classes of white-collar tasks. Yet in the consumer space, the long-predicted “AI apocalypse” hasn’t really arrived—at least not in the way many expected.

Instead of dramatic takeovers, consumers mostly see AI identifier apps, novelty chatbots, and cloud-dependent assistants that feel powerful one moment and untrustworthy the next.

This is where Apple takes a very different path.

With Apple Intelligence, Apple is positioning AI not as a flashy feature, but as infrastructure—quietly embedded into devices people already use every day. The goal is not “AI everywhere,” but AI for the rest of us: personal, private, offline-capable, and deeply integrated with apps and the operating system.

AI in Consumer Devices: A Shift in Philosophy

Consumer AI has historically been cloud-first:

- Data is uploaded.

- Models run remotely.

- Results are streamed back.

Apple Intelligence flips that model.

The focus is on on-device intelligence, where AI:

- Understands your preferences

- Adapts continuously to your behavior

- Nudges you toward safer, better decisions

- Works even when you’re offline

- Never treats your personal data as a product

This creates a new category of consumer AI—one that is aware, contextual, and deeply personal, without being invasive.

Aware, Adaptive, and Personal

At its core, Apple Intelligence is designed to be:

Aware of Your Preferences

Your device learns how you write, plan, travel, and play. Over time, AI adapts to:

- Your tone and style

- Your recurring habits

- Your preferred apps and workflows

Continuously Fine-Tuned With Your Data

Instead of centralizing learning in the cloud, personalization happens locally, drawing on context that stays on your device. The experience adapts to you, not to aggregated user profiles.

Nudging You to Safety

Built-in guardrails ensure AI interactions avoid unsafe, misleading, or harmful outputs—without requiring constant user oversight.

Privacy-First, by Design

Apple Intelligence inherits Apple’s long-standing privacy philosophy:

- Data minimization

- On-device inference

- Explicit user opt-in

Users must enable Apple Intelligence, after which the model is downloaded directly to the device. This is a critical design choice: intelligence lives with the user, not somewhere else.
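Because the model only exists on the device after the user opts in, an app should check for it before showing an AI feature. The sketch below assumes the `SystemLanguageModel` availability API from the Foundation Models framework on iOS 26 or macOS 26; the function name `onDeviceModelStatus` and the status strings are illustrative, not part of any Apple API.

```swift
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Returns a short, user-facing description of the on-device model's state.
/// The function name and messages are illustrative assumptions.
func onDeviceModelStatus() -> String {
    #if canImport(FoundationModels)
    if #available(iOS 26.0, macOS 26.0, *) {
        switch SystemLanguageModel.default.availability {
        case .available:
            return "Ready"
        case .unavailable(.appleIntelligenceNotEnabled):
            return "Turn on Apple Intelligence in Settings to use this feature"
        case .unavailable(.modelNotReady):
            return "The model is still downloading or preparing"
        case .unavailable(.deviceNotEligible):
            return "This device does not support Apple Intelligence"
        case .unavailable(let other):
            return "Unavailable: \(other)"
        }
    }
    #endif
    // Reached on older OS versions or SDKs without the framework.
    return "The Foundation Models framework is not available on this OS or SDK"
}

print(onDeviceModelStatus())
```

Hiding or disabling the feature when the status is anything other than ready keeps the rest of the app usable for people who never opt in.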

For tasks that exceed on-device capabilities, Apple introduces Private Cloud Compute, a platform that:

- Runs Apple-designed models

- Uses ephemeral computation

- Never stores personal data

- Maintains the same privacy guarantees as local execution

The Synergy: On-Device Models × Apps × Corporate Models

One of the most powerful ideas behind Apple Intelligence is model synergy:

- On-device foundation models handle personalization, context, and offline tasks.

- Apps provide domain-specific logic, UI, and workflows.

- Corporate or cloud models can be selectively invoked—without exposing raw personal data.

This layered approach allows developers to build intelligent apps without choosing between capability and privacy.
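One way to picture the layering is as a small routing decision inside the app. The following is a hypothetical app-level sketch, not how Apple routes requests internally: the types `AssistRequest` and `Route` and the rule in `route(_:)` are all assumptions made for illustration.

```swift
/// Hypothetical description of a request the app wants handled.
struct AssistRequest {
    var containsPersonalData: Bool
    var needsNetwork: Bool   // e.g. live prices or company inventory
    var isOffline: Bool
}

/// Where the app decides to send the request.
enum Route: Equatable {
    case onDevice               // local foundation model
    case cloud(redacted: Bool)  // company-hosted model, personal fields stripped if needed
    case unavailable            // needs the network, but the device is offline
}

/// Prefers the on-device model and only goes to the cloud when the task needs it.
func route(_ request: AssistRequest) -> Route {
    if !request.needsNetwork { return .onDevice }
    if request.isOffline { return .unavailable }
    return .cloud(redacted: request.containsPersonalData)
}

let decision = route(AssistRequest(containsPersonalData: true,
                                   needsNetwork: true,
                                   isOffline: false))
print(decision)  // prints cloud(redacted: true)
```

The point of the sketch is the default: personal context stays local, and anything sent elsewhere is redacted first.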

Foundation Models: The Core of Apple Intelligence

Apple exposes its generative capabilities through Foundation Models, a new framework designed for safe, controllable AI inside apps.

Model Overview

- ~3B parameters

- Multilingual

- Multimodal

- Optimized for on-device execution

- Integrated with Private Cloud Compute

Core Capabilities

Foundation Models support a wide range of generative and understanding tasks:

- Instruction following

- Tool calling (interacting with system APIs like HealthKit, Contacts, and app-defined tools)

- Guardrails and safety enforcement

- Token usage measurement

- Text generation and understanding

Supported use cases include:

- Summarization

- Entity extraction

- Text refinement and editing

- Classification and judgment

- Dialog for games

- Creative content generation
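As a rough idea of what a use case like summarization looks like in code, here is a minimal sketch that assumes the `LanguageModelSession` API on iOS 26 or macOS 26. The helper names `summaryPrompt` and `summarize` are made up for this example.

```swift
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Builds a prompt scoped to a single task (plain string handling, no model involved).
func summaryPrompt(for article: String, maxSentences: Int) -> String {
    "Summarize the following article in at most \(maxSentences) sentences.\n\n\(article)"
}

#if canImport(FoundationModels)
/// Sends the prompt to the on-device model and returns the generated text.
@available(iOS 26.0, macOS 26.0, *)
func summarize(_ article: String) async throws -> String {
    let session = LanguageModelSession()
    let response = try await session.respond(
        to: summaryPrompt(for: article, maxSentences: 2)
    )
    return response.content
}
#endif
```

The call is asynchronous and can throw, so a real app would run it from a task and handle failures such as the model being unavailable.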

What the On-Device Model Can Do

When designing features, it helps to understand where the model shines.

Supported Capabilities

| Task | Example Prompt |
|---|---|
| Summarize | “Summarize this article.” |
| Extract entities | “List the people and places mentioned in this text.” |
| Understand text | “What happens to the dog in this story?” |
| Refine text | “Change this story to be in second person.” |
| Classify | “Is this text relevant to the topic ‘Swift’?” |
| Creative writing | “Generate a short bedtime story about a fox.” |
| Tag generation | “Provide two tags for this text.” |
| Game dialog | “Respond as a friendly inn keeper.” |

Capabilities to Avoid

The on-device language model is not a general-purpose reasoning engine. It is intentionally constrained.

| Capability | Example Prompt |
|---|---|
| Basic math | “How many b’s are there in bagel?” |
| Code generation | “Generate a Swift navigation list.” |
| Complex reasoning | “If I’m facing Canada, what direction is Texas?” |

These limitations are not weaknesses—they are design boundaries that improve reliability, performance, and safety.
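For tasks on the avoid list, ordinary code is usually the better tool. The small sketch below answers the letter-counting question from the table deterministically; the function name `count(of:in:)` is just an illustrative choice.

```swift
/// Counts how often a letter appears in a word, ignoring case.
func count(of letter: Character, in word: String) -> Int {
    let target = String(letter).lowercased()
    return word.filter { String($0).lowercased() == target }.count
}

print(count(of: "b", in: "bagel"))  // prints 1
```

An app can compute exact answers like this itself and leave the model to phrase or explain the result.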

Prompt Engineering: Designing for Precision

Apple encourages task-oriented prompting, where clarity beats cleverness.

Key Principles

- Be explicit about requirements

- Keep prompts scoped to a single task

- Provide structure and examples when possible

Instructions and System Prompts

Instructions act as higher-order controls that define:

The model’s role:

- “You are a mentor.”

- “You are a movie critic.”

The task:

- “Help extract calendar events.”

Style preferences:

- “Respond as briefly as possible.”

Safety constraints:

- “Respond with ‘I can’t help with that’ if asked to do something dangerous.”

Well-designed instructions dramatically improve consistency and trustworthiness.
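In code, instructions are typically set once when a session is created, while prompts change per request. This sketch assumes the `LanguageModelSession(instructions:)` initializer on iOS 26 or macOS 26; the helper `makeInstructions`, the function `makeCalendarSession`, and the scheduling role are hypothetical.

```swift
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Joins the role, task, style, and safety lines into one instructions string.
func makeInstructions(role: String, task: String, style: String, safety: String) -> String {
    [role, task, style, safety].joined(separator: "\n")
}

#if canImport(FoundationModels)
/// Creates a session whose instructions stay fixed for the whole conversation.
@available(iOS 26.0, macOS 26.0, *)
func makeCalendarSession() -> LanguageModelSession {
    let instructions = makeInstructions(
        role: "You are a scheduling assistant.",  // hypothetical role for this example
        task: "Help extract calendar events.",
        style: "Respond as briefly as possible.",
        safety: "Respond with 'I can't help with that' if asked to do something dangerous."
    )
    return LanguageModelSession(instructions: instructions)
}
#endif
```

Keeping the four parts as separate lines makes it easy to adjust one of them, such as the style, without touching the safety constraint.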

Context Size and Guided Generation

The Foundation Models framework supports guided generation, which lets developers:

- Convert raw text into structured responses

- Enforce schemas

- Reduce malformed or off-schema output
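A minimal sketch of guided generation for the tag-generation task, assuming the `@Generable` and `@Guide` macros and `respond(to:generating:)` on iOS 26 or macOS 26. The type `NoteTags` and the helpers `suggestTags` and `normalizeTags` are hypothetical names. The schema constrains the shape of the output, not its truth, so the sketch still cleans up the values afterwards.

```swift
import Foundation
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Trims, lowercases, and de-duplicates tags while keeping their order.
func normalizeTags(_ raw: [String]) -> [String] {
    var seen = Set<String>()
    var result: [String] = []
    for tag in raw {
        let cleaned = tag.trimmingCharacters(in: .whitespacesAndNewlines).lowercased()
        if !cleaned.isEmpty && seen.insert(cleaned).inserted {
            result.append(cleaned)
        }
    }
    return result
}

#if canImport(FoundationModels)
/// Schema the model must fill in; the guide asks for exactly two tags.
@available(iOS 26.0, macOS 26.0, *)
@Generable
struct NoteTags {
    @Guide(description: "Short topical tags for the text", .count(2))
    var tags: [String]
}

/// Asks the model for a typed NoteTags value instead of free-form text.
@available(iOS 26.0, macOS 26.0, *)
func suggestTags(for text: String) async throws -> [String] {
    let session = LanguageModelSession(instructions: "Provide two tags for the user's text.")
    let response = try await session.respond(to: text, generating: NoteTags.self)
    return normalizeTags(response.content.tags)
}
#endif
```

Because the response arrives as a Swift value, there is no JSON to parse and no format to validate by hand.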

With GenerationOptions, developers can:

- Control temperature

- Balance creativity vs. determinism

- Optimize output for specific UX needs
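The sketch below shows one way to tie temperature to a UX need, assuming `GenerationOptions(temperature:)` and `respond(to:options:)` on iOS 26 or macOS 26. The `OutputStyle` enum and the specific temperature values are illustrative assumptions, not recommended settings.

```swift
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Hypothetical UX-level styles an app might expose.
enum OutputStyle {
    case precise, balanced, playful
}

/// Maps a style to a sampling temperature; lower values give more repeatable output.
func temperature(for style: OutputStyle) -> Double {
    switch style {
    case .precise: return 0.1
    case .balanced: return 0.7
    case .playful: return 1.5
    }
}

#if canImport(FoundationModels)
/// Generates a response with the temperature chosen for the given style.
@available(iOS 26.0, macOS 26.0, *)
func respond(to prompt: String, style: OutputStyle) async throws -> String {
    let options = GenerationOptions(temperature: temperature(for: style))
    let session = LanguageModelSession()
    return try await session.respond(to: prompt, options: options).content
}
#endif
```

A tagging or extraction feature would likely sit at the precise end, while game dialog or bedtime stories can afford a higher setting.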

Foundation Models adapters go a step further: developers can train a custom adapter that specializes the on-device model for a narrow task and load it through the same framework.

Real-World Use Cases

Apple Intelligence unlocks practical, consumer-focused experiences:

- Dynamic gameplay with adaptive NPC dialog

- Trip planning based on personal photos and memories

- Automatic tagging of notes, images, and content

- Context-aware recommendations without surveillance

These are not futuristic demos—they are features that fit naturally into everyday apps.

Core ML: The Broader ML Ecosystem

Alongside Foundation Models, Core ML remains the backbone of Apple’s on-device ML strategy.

Core ML Capabilities

- Vision

- Natural Language Processing

- Speech recognition

- Sound analysis

Core ML leverages:

- Metal for GPU acceleration

- The Neural Engine built into Apple silicon
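In practice, an app states which compute units Core ML may use and lets the framework schedule the work. This sketch uses `MLModelConfiguration` and `MLModel(contentsOf:configuration:)`; the helper names and the idea of a caller-supplied model URL are assumptions for illustration.

```swift
import Foundation
#if canImport(CoreML)
import CoreML

/// Builds a configuration that lets Core ML choose among CPU, GPU, and Neural Engine,
/// or restricts it to the CPU (handy when debugging).
func makeConfiguration(cpuOnly: Bool = false) -> MLModelConfiguration {
    let configuration = MLModelConfiguration()
    configuration.computeUnits = cpuOnly ? .cpuOnly : .all
    return configuration
}

/// Loads a compiled model (.mlmodelc) from a URL supplied by the app.
func loadModel(at compiledModelURL: URL) throws -> MLModel {
    try MLModel(contentsOf: compiledModelURL, configuration: makeConfiguration())
}
#endif
```

With `.all`, the same code runs on any Apple device and uses whatever hardware that device offers.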

Apple provides a growing catalog of pre-trained models, plus tools for:

- Model conversion

- Personalization

- On-device fine-tuning

This makes Core ML ideal for workloads that require:

- Deterministic behavior

- High performance

- Custom architectures

Apple’s Bet on Consumer AI

Apple Intelligence is not trying to win the AI arms race by being the biggest or loudest model.

Instead, Apple is betting on:

- Trust over novelty

- Integration over abstraction

- Privacy over data extraction

- Practical intelligence over artificial generality

In doing so, Apple may quietly redefine what consumer AI looks like—not as something users interact with, but something that simply works for them.

AI for the rest of us.